By Sébastien Féré.

Over the last twenty years, Open Source Software components – powered by a vibrant Open Source community – have radically changed the way we develop software. A "proxy" instance of a Binary Repository can do much more than just cache artifacts. This article details some of these opportunities, from security best practices to advanced deployment techniques that can be applied to containers and Kubernetes.

Back in the 2000s, every company developed its libraries and application frameworks mostly in-house, sometimes with poor outcomes in terms of quality and performance. In the Java ecosystem, Log4j was first released in 2001 and the Spring framework in 2003, and then we witnessed an avalanche of libraries for all languages and all purposes.

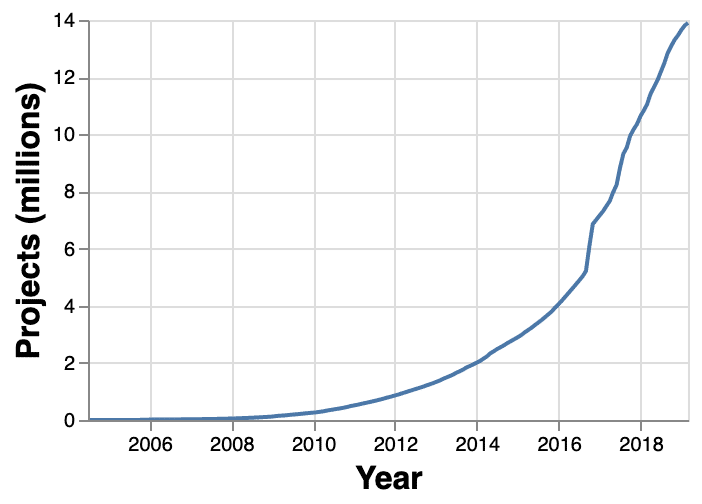

Over the years, the number of artifacts embedded in our applications kept growing, just as the number of projects hosted in public repositories increased exponentially.

source: https://mvnrepository.com/repos

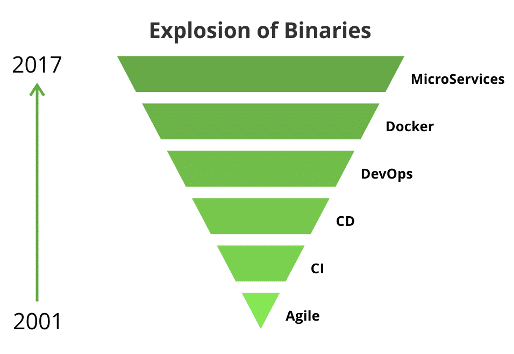

Nowadays, even the simplest demo application embeds dozens of libraries, either directly or transitively. The advent of DevOps practices such as Infrastructure-as-Code, Containers and Microservices has multiplied the quantity of binaries and packages tenfold.

source: https://dzone.com/articles/use-a-binary-repository-manager-and-keep-up-with-d

It is very common for a developer machine or a CI executor to hold gigabytes of binary data, just for cached libraries coming from central public repositories such as Maven Central, Docker Hub, the NPM public Registry, the Python Package Index, etc. For substantial teams, downloading these artifacts can put a significant load on Internet bandwidth and cost your CI pipelines precious seconds as well.

Caching artifacts within the Enterprise has therefore been a widely used practice since the early days of Continuous Integration. The two enterprise options to achieve artifact caching for all common programming languages are JFrog Artifactory and Sonatype Nexus.

Caching artifacts can save your day in many ways…

Which company has not suffered a loss of Internet access for a couple of hours? Sometimes, the problem comes from outside: public repositories can suffer outages as well. For instance, Quay.io experienced serious difficulties on May 19, 2020, from 3am to 11pm EDT. On a more predictable note, the move of the Internet to "HTTPS everywhere" forced people to adjust repository settings.

In some cases, a single missing library can break everything! Remember the left-pad chaos in 2016, caused by a single developer who unpublished his packages, impacting – at scale – thousands of projects…

More recently, Docker Hub announced an updated Terms of Service, with both a:

While these changes are fully understandable as a way to maintain a free and high-quality service for most users, the rate limit can impact companies that run workloads with containers. Deploying application environments many times per day with database images (PostgreSQL, Redis, etc.) and messaging images (Kafka, etc.) can quickly exceed the rate limit.
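To get a feel for how quickly frequent deployments add up, here is a rough back-of-the-envelope sketch. All figures in it are assumptions made for illustration: the environment names, the number of images and deployments, and the limit of 100 pulls per 6-hour window. Check the current Docker Hub terms for the limit that actually applies to your account. The sketch also assumes that deployments are spread evenly over the day and that nothing is cached locally.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Environment:
    """A hypothetical application environment that is redeployed regularly."""
    name: str
    images: int           # distinct public images pulled per deployment
    deploys_per_day: int  # number of deployments per day


def pulls_per_window(envs: List[Environment], window_hours: int = 6) -> float:
    """Average number of pulls in one window, assuming evenly spread
    deployments and no local image cache."""
    pulls_per_day = sum(e.images * e.deploys_per_day for e in envs)
    return pulls_per_day * window_hours / 24


# Assumed limit for illustration only (pulls per 6-hour window).
ASSUMED_LIMIT = 100

envs = [
    Environment("integration", images=6, deploys_per_day=40),
    Environment("staging", images=6, deploys_per_day=48),
]

estimate = pulls_per_window(envs)
print(f"Estimated pulls per 6 hours: {estimate:.0f} (assumed limit: {ASSUMED_LIMIT})")
print("Over the limit" if estimate > ASSUMED_LIMIT else "Within the limit")
```

With these assumed numbers, two modest environments are already enough to go past the assumed limit, which is why the pulls are better served from a cache inside the company.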

Again, if you are using a Proxy instance for your Docker images coming from the Internet, you don’t have to worry at all…

If you only need to proxy Docker images and other cloud-native artifacts (Helm charts, OPAs, Singularity, etc.), you can now do it with the CNCF-graduated Harbor registry. The recent 2.1 version includes the Proxy Cache feature, enabling Harbor to act as a pull-through cache for registries such as Docker Hub and Harbor.
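Pulling through a proxy cache usually means changing the image reference so that it points at the proxy instead of the public registry. The following is a minimal sketch of such a rewrite, not a definitive implementation. The host `harbor.example.com` and the project name `dockerhub-proxy` are hypothetical, and the target layout (`<host>/<proxy project>/<repository>`, with official images under `library/`) is assumed here; verify it against the documentation of your registry. Only Docker Hub images are redirected; references to other registries are left untouched.

```python
from typing import Tuple


def split_registry(image: str) -> Tuple[str, str]:
    """Split an image reference into (registry, remainder).

    Follows the usual Docker convention: the first path component is a
    registry host only if it contains '.' or ':' or equals 'localhost'.
    Otherwise the image is assumed to come from Docker Hub.
    """
    first, sep, rest = image.partition("/")
    if sep and ("." in first or ":" in first or first == "localhost"):
        return first, rest
    return "docker.io", image


def via_proxy(
    image: str,
    proxy_host: str = "harbor.example.com",  # hypothetical proxy host
    proxy_project: str = "dockerhub-proxy",  # hypothetical proxy project
) -> str:
    """Rewrite a Docker Hub image reference so it is pulled through the proxy."""
    registry, remainder = split_registry(image)
    if registry != "docker.io":
        return image  # this sketch only redirects Docker Hub images
    if "/" not in remainder:
        remainder = "library/" + remainder  # official images live under library/
    return f"{proxy_host}/{proxy_project}/{remainder}"


for ref in ["redis:6", "bitnami/kafka:2.6.0", "quay.io/coreos/etcd:v3.4.13"]:
    print(f"{ref} -> {via_proxy(ref)}")
```

The same function could be applied to deployment manifests in a CI step, so that teams keep writing the short public names while the actual pulls go through the cache.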

With a proxy instance for your binaries, your company can do much more than just cache artifacts. You can help your Security team sleep better at night by applying Shift-Left principles, such as Vulnerability scanning on open source (OSS) components.

Let’s first take a few steps back and think about the amount of Open Source code within our Business Applications – 80%, 90%, more? I guess we should think in these terms about the ratio of Open Source vs. in-house code in our applications. On the one hand, it is great to be able to focus on the User Experience or Business Features rather than on transaction management, interoperability with external systems, etc. On the other side of the coin, we effectively outsource the technical concerns of our applications to the Open Source community. In most cases, the code written by the Community and then battle-tested on thousands of applications is much more reliable and efficient than any code written in-house in a proprietary approach… in most cases… and this is exactly where "trust" means everything!

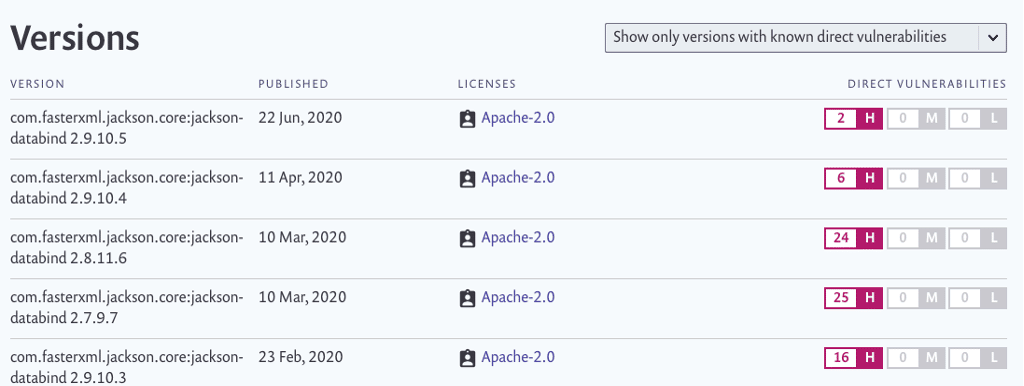

The example below, taken from Snyk.io, shows the evolution of High-rated vulnerabilities in the heavily used jackson-databind (fasterxml) library for JSON and REST APIs. The development team made a great effort early this year to fix all vulnerabilities – the recent 2.10.x and 2.11.x versions have no known vulnerabilities.

source: https://snyk.io/vuln/maven:com.fasterxml.jackson.core%3Ajackson-databind

For years, applications have been shipped to production with severe vulnerabilities. The inclusion of Security concerns within DevOps gave birth to the DevSecOps Manifesto and Build-Security-In approaches, leading to a growing awareness of vulnerabilities both in the Open Source community and within Organizations. Over the last few years, the strong involvement of Security companies in Open Source has made Open Source Software (OSS) components more and more secure. At the same time, security advisory boards have also flourished on the websites of most software platforms and vendors, as a matter of transparency.

As the jackson-databind example shows, tracking down vulnerabilities in software assets cannot be done manually, even for a single application. There are several tools that scan OSS components, including Docker images, for vulnerabilities, among them:

Rules based on various risk factors can be applied to block the download or quarantine vulnerable artifacts, directly at the Proxy "source".
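To make the idea of such rules concrete, here is a minimal sketch of a decision function based on a single risk factor, the CVSS base score. It is not the rule engine of any particular product: the `Finding` type, the `decide` function and the identifiers used in the example are hypothetical, and the thresholds are assumptions that simply follow the CVSS v3 rating bands (7.0 and above is High, 9.0 and above is Critical).

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Finding:
    """One vulnerability reported by a scanner for a given artifact."""
    identifier: str  # placeholder identifier, not a real advisory
    cvss: float      # CVSS base score, from 0.0 to 10.0


def decide(
    findings: List[Finding],
    block_at: float = 9.0,       # assumed threshold: Critical
    quarantine_at: float = 7.0,  # assumed threshold: High
) -> str:
    """Return 'block', 'quarantine' or 'allow' based on the worst finding."""
    if not findings:
        return "allow"
    worst = max(f.cvss for f in findings)
    if worst >= block_at:
        return "block"
    if worst >= quarantine_at:
        return "quarantine"
    return "allow"


# Hypothetical scan results for three artifacts requested through the proxy.
scans = {
    "library-a:1.0": [Finding("EXAMPLE-0001", 9.8), Finding("EXAMPLE-0002", 5.3)],
    "library-b:2.3": [Finding("EXAMPLE-0003", 7.5)],
    "library-c:4.1": [],
}

for artifact, findings in scans.items():
    print(f"{artifact}: {decide(findings)}")
```

Real policies typically combine several risk factors (license, age of the component, availability of a fix), but the principle stays the same: the decision is taken once, at the proxy, rather than on every developer machine.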

Some of you may already cache artifacts for some languages – Maven, NPM certainly being the most used – on the same instance on which you publish your private artifacts, proudly built in your CI pipelines.

From a security perspective, mixing public and private artifacts on the same instance is definitely not the best choice.

A Proxy and a Private binary instance have very little in common:

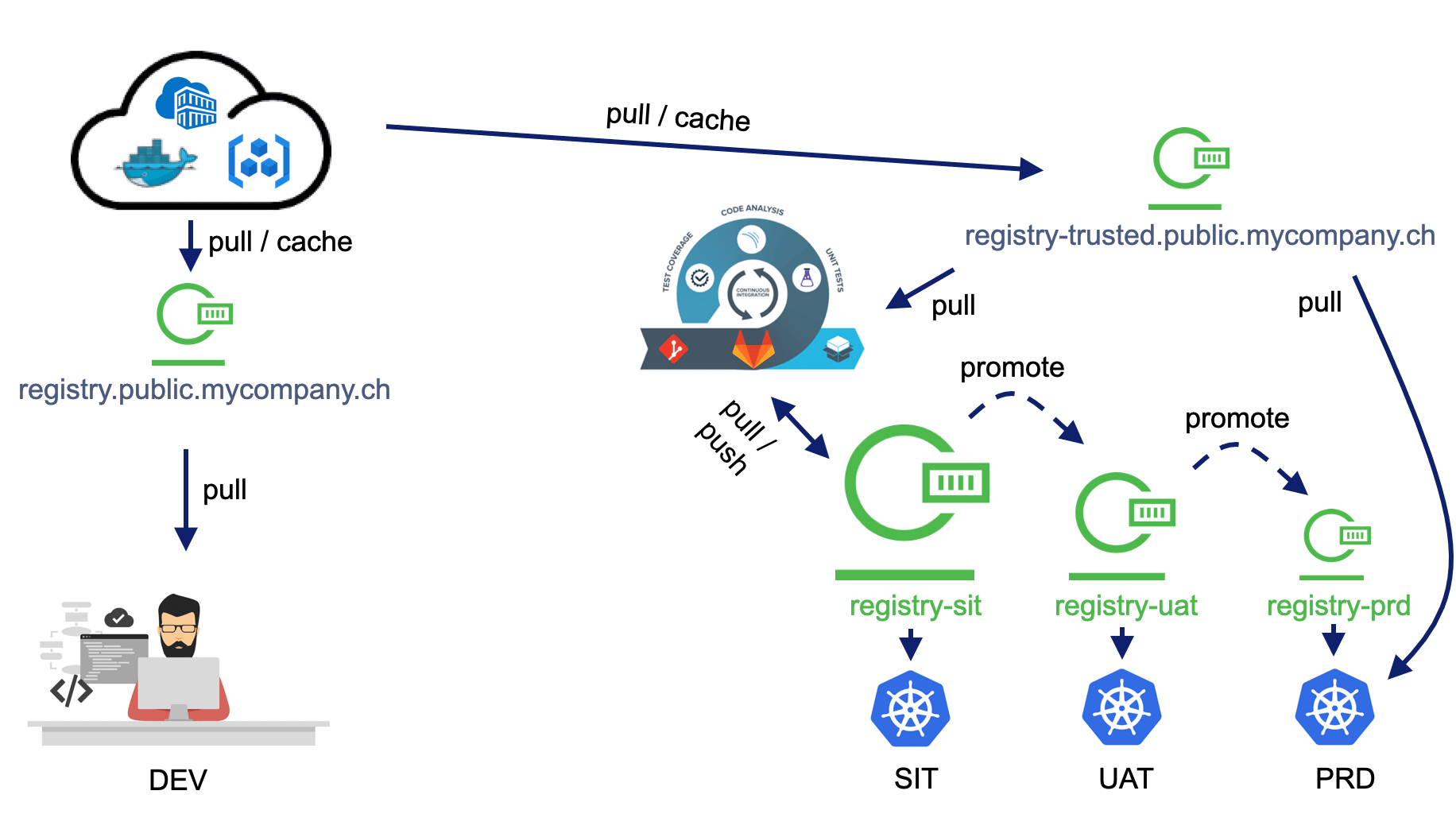

All of these considerations may lead you to a diagram such as the one below:

A proxy instance for binaries should be a must-have for any company. With the right selection of tools and a relevant deployment setup, you can drastically increase both your independence with regard to public repositories / registries and the level of security of your apps… all without ruining your budget 😉.

Some advanced techniques can be used to: