Photo by Bharat Patil on Unsplash

Every day at Sokube we evangelize GitOps as a way to simplify, improve and accelerate digital operations, and we support our customers on this journey. With the abundance of technology bricks that now compose the "Cloud Native" landscape, something that years ago looked difficult and hazardous is now easily reduced to choosing the combination of products / platforms that best fits a use-case.

However, when it comes to keeping sensitive information confidential within a GitOps approach, the choices are neither numerous nor simple. Because they rely on reversible base64 encoding rather than encryption, Kubernetes Secrets have been the Achilles' heel of the platform with regard to information security and confidentiality, whether or not RBAC has been correctly configured. Using native Kubernetes Secrets to store confidential or sensitive information should simply be prohibited, at least on production systems.
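To make the point about base64 concrete, here is a minimal sketch using the sample password from later in this article. It only assumes a `base64` binary that accepts `--decode`; no key is involved at any step, which is exactly the problem.

```shell
#!/bin/sh
# base64 is an encoding, not encryption: no key is needed to reverse it.
# The value below is the sample password used later in this article.
plain='highlysecret'

# This is what ends up in the .data field of a Secret manifest.
encoded=$(printf '%s' "$plain" | base64)

# Anyone who can read the manifest can recover the original value.
decoded=$(printf '%s' "$encoded" | base64 --decode)

echo "stored in the manifest:  $encoded"
echo "recovered without a key: $decoded"
```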

A couple of GitOps-friendly alternatives that preserve confidentiality have naturally emerged as workarounds, and the first part of this article gives a short overview of what exists. While HashiCorp Vault remains a truly secure, feature-rich and widely adopted solution, we believe it is positioned rather as an enterprise-wide or cross-organizational solution for confidential information. In the context of GitOps, however, it would distort the "git as single source of truth" principle. Finally, a relevant deployment of HashiCorp Vault often requires appropriate organizational changes as well as a solid budget for its operations.

In the second part of the article, we will focus on two lightweight solutions that provide exactly what is needed for GitOps without disrupting the organization, the project or the budget: Bitnami Sealed Secrets and Soluto's Kamus.

Most of our use-cases involve on-premise-only installations, so while Amazon, Azure and Google each provide secret management for their own platform, we have focused primarily on on-premise solutions. We have evaluated:

| Solution | Category | Complexity | Maturity | Secrets Scope | Dependency | Note |
|---|---|---|---|---|---|---|
| Helm Secrets | On Premise | MEDIUM | Abandoned | Org | None | Abandoned, fork jkroepke/helm-secrets |
| Kamus | On Premise | LOW | Alpha | Service Account Cluster | None | Promising |
| External Secrets Operator | On Premise (needs a cloud KMS backend) | LOW | Alpha | Org | Needs a KMS backend behind | Very young, needs a KMS behind |
| aws-secret-operator | On Premise (needs an AWS SM backend) | LOW | Alpha | Org | Needs AWS SM behind | Needs AWS Secrets Manager |
| KSOPS | On Premise | LOW | Alpha | Org | SOPS Kustomize | Can integrate with ArgoCD |
| Kapitan with Tesoro | On Premise | LOW | Alpha | Org | Kapitan KMS backend | Nice but makes sense with Kapitan only |
| Bitnami Sealed Secrets | On Premise | VERY LOW | Stable | Cluster | None | Simple but need to copy keys across clusters |
| GoDaddy Kubernetes External Secrets | On Premise (needs a cloud KMS backend) | LOW | Stable | Org | Needs a KMS backend behind | Nice wrapper but needs a KMS behind |
| HashiCorp Vault | On Premise Hosted | HIGH | Stable | Org | None | Proven solution but cost of implementation/management is HIGH |
| Banzai Cloud Bank-Vaults | On Premise | MEDIUM | Stable | Org | HashiCorp Vault | Simplifies Vault but still requires Vault |
| Kustomize secret generator plugins | On Premise | HIGH | Stable | Org | Needs a service behind | Requires developing a plugin and a service behind |

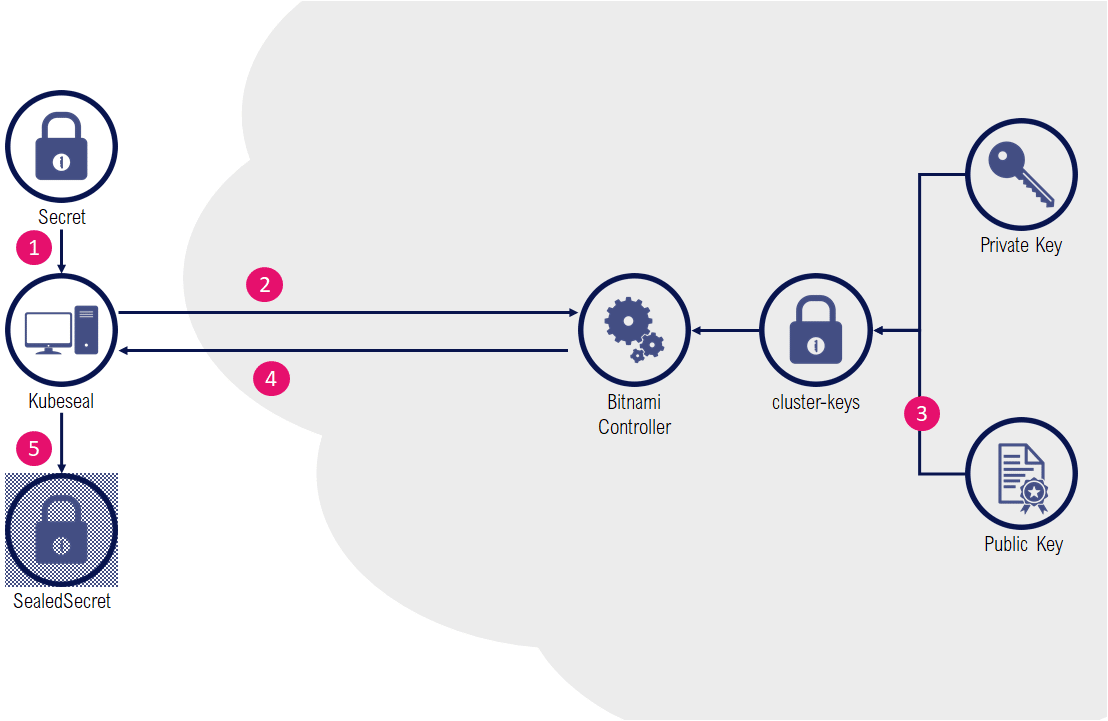

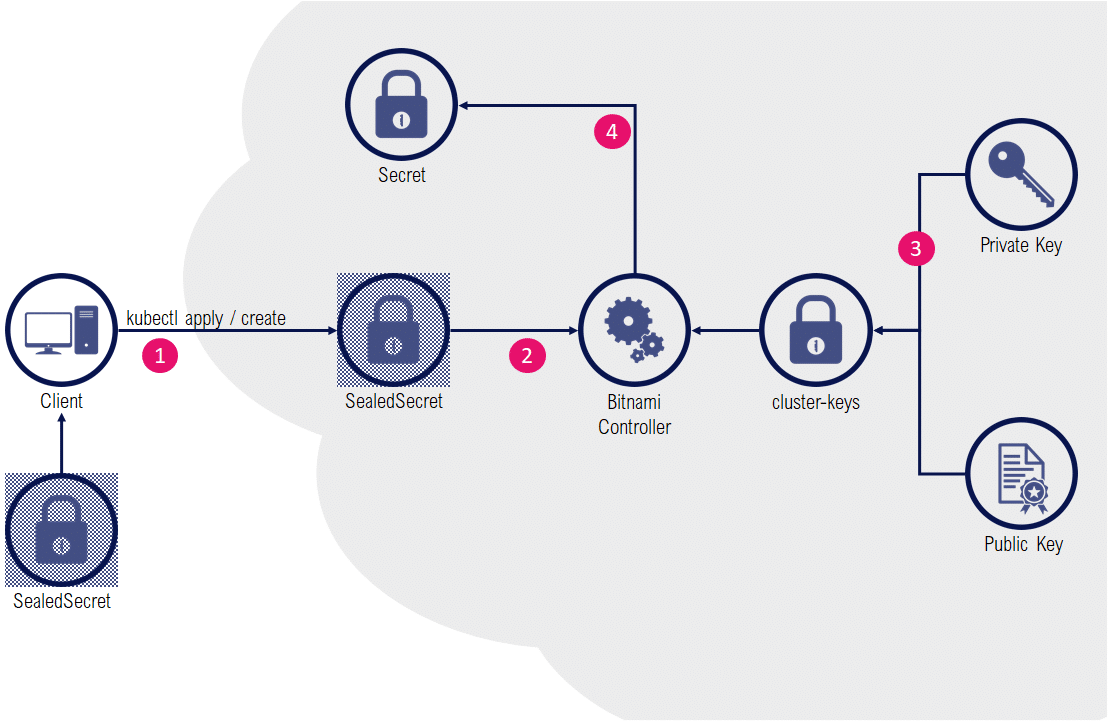

The Bitnami Sealed Secrets solution is based on a controller that runs in the target cluster, where a cluster-specific pair of private/public keys is generated.

The public key is used by a client binary (kubeseal) to live-encrypt data (in the form of custom resources named SealedSecret). This operation can also be done offline (without the need for a cluster with a bitnami controller) by using the controller certificate (public key) that can be downloaded from the cluster. The resulting resource is a SealedSecret that is safe to store inside a git repository.

The controller detects the presence of SealedSecret resources and automatically decrypts their content into an equivalent standard Secret resource.
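The offline mode mentioned above can be sketched as two small shell helpers. The function and file names are purely illustrative; `--fetch-cert` and `--cert` are kubeseal options, and the sketch assumes the controller runs in its default location so that no extra controller flags are needed.

```shell
#!/bin/sh
# Sketch of offline sealing. Function names and file names are illustrative.

# Run once, from a machine that can reach the cluster: save the controller's
# public certificate into the file given as first argument.
fetch_sealing_cert() {
  kubeseal --fetch-cert > "$1"
}

# Run anywhere, without cluster access: seal a Secret manifest read from
# standard input, using only the saved certificate.
seal_offline() {
  kubeseal --cert "$1" -o yaml
}

# Example usage (not executed here):
#   fetch_sealing_cert sealing-cert.pem
#   seal_offline sealing-cert.pem < db-credentials.yaml > db-credentials-sealed-secret.yaml
```

Since the certificate only contains the public key, it can be distributed to developers or CI jobs that have no access to the cluster.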

Installation of the solution is relatively easy:

$ kubectl apply -f https://github.com/bitnami-labs/sealed-secrets/releases/download/v0.13.1/controller.yaml

The client tool (kubeseal) to interact with the controller inside the target cluster is a standalone binary that can be downloaded separately:

$ wget https://github.com/bitnami-labs/sealed-secrets/releases/download/v0.13.1/kubeseal-linux-amd64 -O kubeseal

We will use a specific namespace:

$ kubectl create namespace my-namespace

namespace/my-namespace created

Bitnami's kubeseal can be used in a pipeline, and when it is combined with the dry-run capability of kubectl, we can generate sealed secrets without storing any intermediate resource locally as a file:

$ kubectl create secret generic db-credentials \
    -n my-namespace \
    --from-literal=DBUSER=mydbuser \
    --from-literal=DBPWD=highlysecret \
    --dry-run=client \
    -o yaml \
    | kubeseal -o yaml > db-credentials-sealed-secret.yaml

The resulting SealedSecret can be stored safely inside a git repository:

apiVersion: bitnami.com/v1alpha1
kind: SealedSecret
metadata:
  creationTimestamp: null
  name: db-credentials
  namespace: my-namespace
spec:
  encryptedData:
    DBPWD: <ENCRYPTED DATA>
    DBUSER: <ENCRYPTED DATA>
  template:
    metadata:
      creationTimestamp: null
      name: db-credentials
      namespace: my-namespace

Applying the resource:

$ kubectl apply -f db-credentials-sealed-secret.yaml

Checking the resource:

$ kubectl get sealedsecrets -n my-namespace db-credentials

NAME AGE

db-credentials 2m3s

$ kubectl get events -n my-namespace

LAST SEEN TYPE REASON OBJECT MESSAGE

2m9s Normal Unsealed sealedsecret/db-credentials SealedSecret unsealed successfully

Checking the equivalent Secret (and data) that has been generated by the controller:

$ kubectl get secrets -n my-namespace

NAME TYPE DATA AGE

...

db-credentials Opaque 2 3m18s

$ kubectl get secrets -n my-namespace db-credentials -o jsonpath='{.data.DBUSER}' | base64 --decode

mydbuser

$ kubectl get secrets -n my-namespace db-credentials -o jsonpath='{.data.DBPWD}' | base64 --decode

highlysecret

Important to know: each value is encrypted with the controller's public key and, in the default (strict) scope, the resulting ciphertext is bound to the name and namespace of the sealed secret. Copy-pasting the encrypted data into another secret or another namespace won't work.
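When this binding is too strict for a given workflow, kubeseal offers a `--scope` option that relaxes it: `namespace-wide` allows the sealed secret to be renamed within its namespace, and `cluster-wide` allows it to be unsealed under any name in any namespace. The wrapper below is only a sketch with an illustrative function name; it validates the scope and delegates to kubeseal.

```shell
#!/bin/sh
# Sketch: choose how tightly a sealed value is bound to its name/namespace.
# kubeseal accepts --scope strict (default), namespace-wide or cluster-wide.
seal_with_scope() {
  scope="$1"
  case "$scope" in
    strict|namespace-wide|cluster-wide) ;;
    *) echo "unknown scope: $scope" >&2; return 1 ;;
  esac
  # Reads a Secret manifest on stdin, writes a SealedSecret on stdout.
  kubeseal --scope "$scope" -o yaml
}

# Example usage (not executed here):
#   seal_with_scope namespace-wide < db-credentials.yaml > db-credentials-sealed-secret.yaml
```

Relaxing the scope trades some protection for convenience, so the strict default remains the safer choice when names and namespaces are stable.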

By default, SealedSecrets generated for one cluster won't work on another cluster (installing the Bitnami controller creates a new pair of public/private keys), which can make GitOps staging operations cumbersome, as each environment would need its own SealedSecrets. One solution is to export the key pair from one cluster and import it into the others. However, a compromised key on one cluster would then also grant access to all sealed secrets on every cluster that shares the same key pair.
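The export/import approach can be sketched as follows. This is a hedged example rather than an official procedure: the function name is illustrative, the two arguments are assumed to be kubectl context names, and the sketch assumes the controller runs in `kube-system` with its keys labelled `sealedsecrets.bitnami.com/sealed-secrets-key`, as in a default installation.

```shell
#!/bin/sh
# Sketch: replicate the sealing key pair from one cluster to another.
# Assumptions: the controller runs in kube-system (as with controller.yaml),
# its keys carry the sealedsecrets.bitnami.com/sealed-secrets-key label, and
# the two arguments are kubectl context names.
copy_sealing_keys() {
  src_context="$1"
  dst_context="$2"
  # Stream the key Secrets directly between clusters; nothing touches disk.
  kubectl --context "$src_context" -n kube-system get secret \
      -l sealedsecrets.bitnami.com/sealed-secrets-key -o yaml \
    | kubectl --context "$dst_context" -n kube-system apply -f -
  # Restart the destination controller so that it loads the imported keys.
  kubectl --context "$dst_context" -n kube-system delete pod \
      -l name=sealed-secrets-controller
}

# Example usage (not executed here):
#   copy_sealing_keys staging production
```

The exported data contains the private key, which is why the sketch pipes it between clusters instead of writing it to a file that could end up in a repository.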

➕ Simplicity of setup and usage

➕ Mature and well maintained solution

➖ Regular Secrets are still exposed

➖ No usage outside Secrets (e.g. ConfigMap)

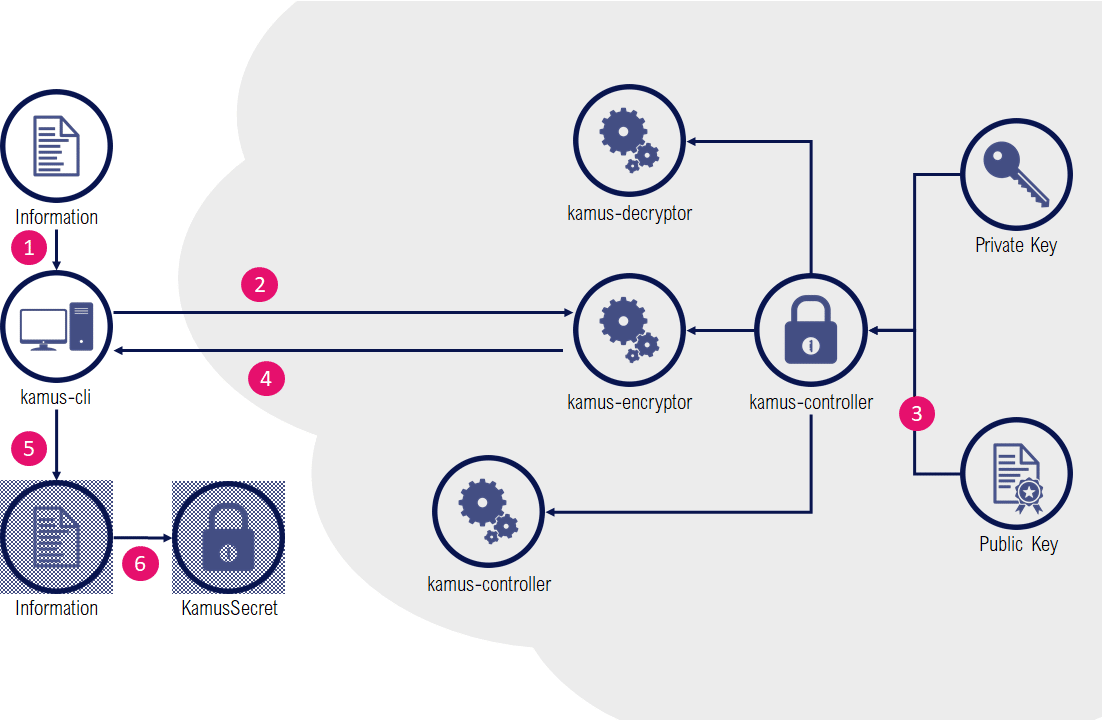

The Kamus solution offers two different mechanisms for secrets generation: one that is similar to Bitnami Sealed Secrets, and another that is a true zero-trust secrets management solution.

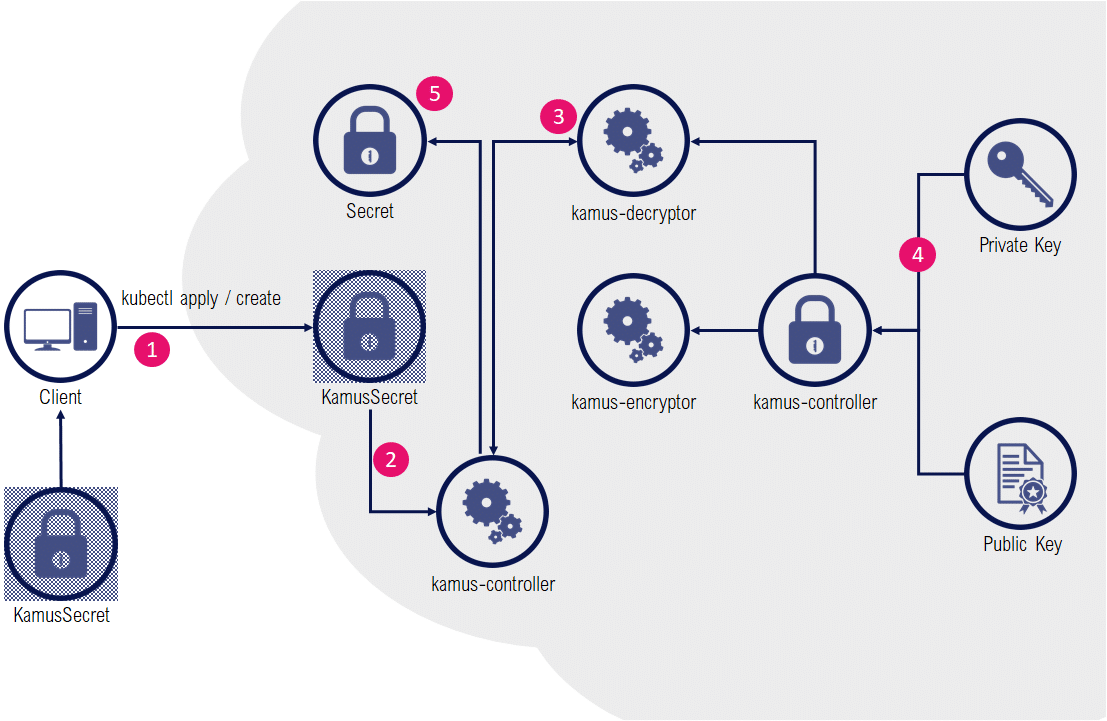

KamusSecret tends to mimic Bitnami Sealed Secrets: a controller inside the cluster detects KamusSecrets and provides a decrypted version as a Secret.

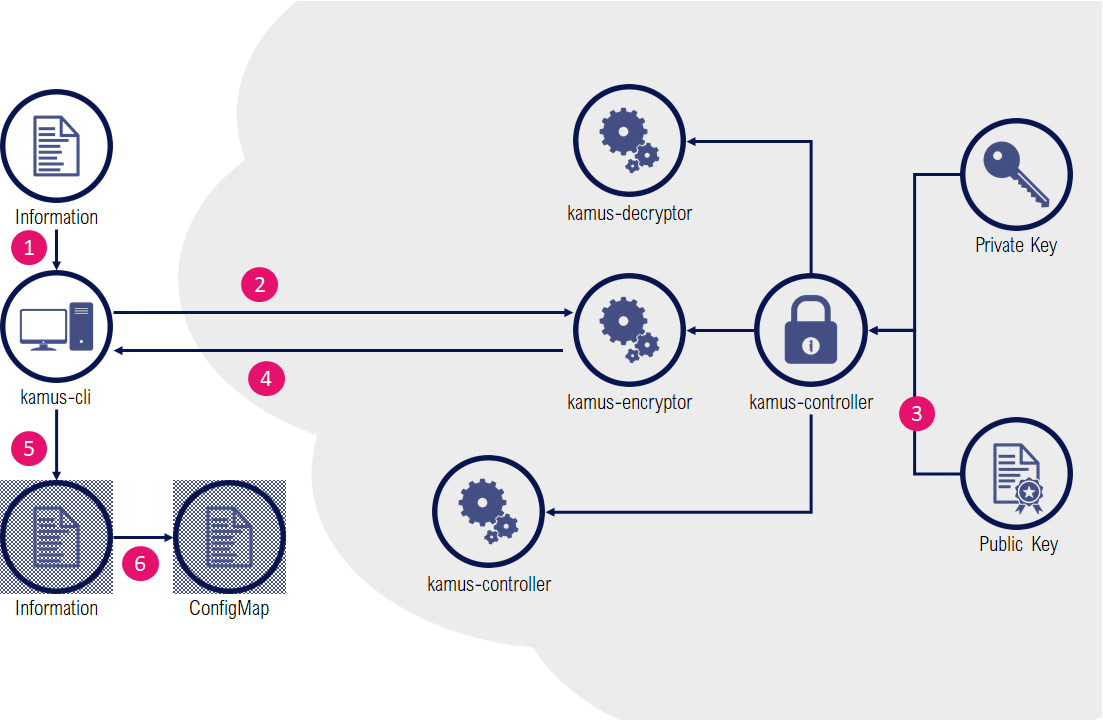

The encryption part is a bit more tedious, as the kamus-cli doesn't support passing a whole Secret resource but rather works as a raw encryption service: individual items need to be encrypted separately with the client, then aggregated manually inside an "envelope" KamusSecret definition.

As with Bitnami, the controller is responsible for decrypting a KamusSecret into a regular Secret.

Unlike a Bitnami Sealed Secret or a KamusSecret, the Kamus zero-trust mode has the huge advantage of never revealing the decrypted data to anything other than the intended service at runtime.

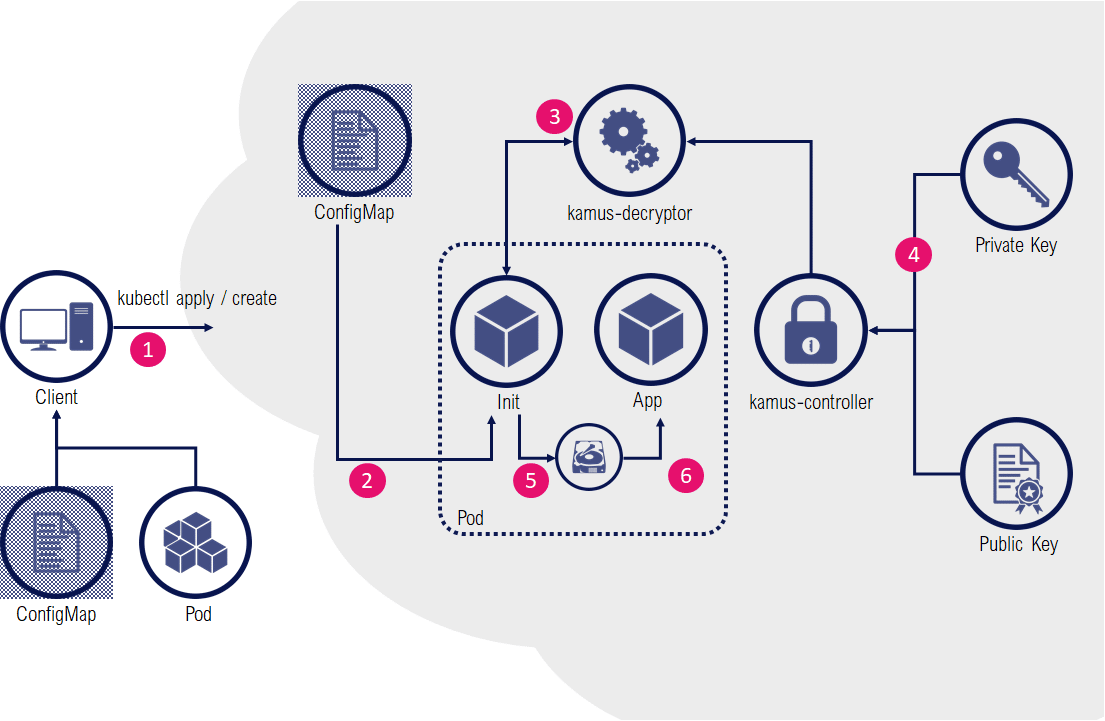

The encryption part of the zero-trust mode is very similar to the previous example: instead of wrapping encrypted data in a KamusSecret, a ConfigMap is used to store the secrets as encrypted files in a mounted ConfigMap volume.

To consume the secrets, an initContainer invokes the kamus-decryptor service, and the decrypted results are stored in an ephemeral emptyDir volume accessible only to the application container in the pod.

Installation of the controller and its CustomResourceDefinitions is relatively easy:

$ helm repo add soluto https://charts.soluto.io

$ key=$(openssl rand -base64 32 | tr -d '\n')

$ helm upgrade --install kamus soluto/kamus --set keyManagement.AES.key=$key

We've added the random key generation here because the solution comes (dangerously) with a predefined key. Also, unlike Bitnami, the solution installs its workloads in the default namespace, so we recommend tweaking the Helm release accordingly. Finally, the encryptor and decryptor deployments are set to 2 replicas by default, which might be unnecessary.
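Because a malformed key is easy to miss, a quick sanity check before installing the chart can help. The sketch below is not part of the Kamus documentation; it simply assumes that the `tr` call is meant to strip the newline (`'\n'`) and verifies that the generated key decodes back to 32 bytes.

```shell
#!/bin/sh
# Generate the key, stripping only the newline added by openssl.
key=$(openssl rand -base64 32 | tr -d '\n')

# Sanity check: the key should decode back to exactly 32 random bytes.
key_bytes=$(printf '%s' "$key" | base64 --decode | wc -c | tr -d ' ')

if [ "$key_bytes" -eq 32 ]; then
  echo "key looks valid ($key_bytes bytes)"
else
  echo "unexpected key length: $key_bytes bytes" >&2
fi
```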

The Kamus client is unfortunately an npm package (a debatable choice that forces users to install the npm package manager). A Docker container image seems to be available, but it is not mentioned on the Kamus website, so we have chosen to stick to the documented approach:

$ npm install -g @soluto-asurion/kamus-cli

We will use a specific namespace and a specific service account:

$ kubectl create namespace my-namespace

namespace/my-namespace created

$ kubectl create serviceaccount dummy-sa -n my-namespace

serviceaccount/dummy-sa created

The kamus-cli also needs the encryptor pod to be exposed through a URL:

$ export POD_NAME=$(kubectl get pods --namespace default -l "app=kamus,release=kamus,component=encryptor" -o jsonpath="{.items[0].metadata.name}")

$ kubectl port-forward $POD_NAME 8880:9999 &

In the first scenario, we set up a KamusSecret containing our sensitive information:

$ kamus-cli encrypt --secret mydbuser --service-account dummy-sa --namespace my-namespace --allow-insecure-url --kamus-url http://localhost:8880

[info kamus-cli]: Encryption started...

[info kamus-cli]: service account: dummy-sa

[info kamus-cli]: namespace: my-namespace

[warn kamus-cli]: Auth options were not provided, will try to encrypt without authentication to kamus

Handling connection for 8880

[info kamus-cli]: Successfully encrypted data to dummy-sa service account in my-namespace namespace

[info kamus-cli]: Encrypted data:

0XZm9vDLVvjNGCgeFZqNnA==:GoaTZCXkwyfoBNRwjtgsOQ==

$ kamus-cli encrypt --secret highlysecret --service-account dummy-sa --namespace my-namespace --allow-insecure-url --kamus-url http://localhost:8880

[info kamus-cli]: Encryption started...

[info kamus-cli]: service account: dummy-sa

[info kamus-cli]: namespace: my-namespace

[warn kamus-cli]: Auth options were not provided, will try to encrypt without authentication to kamus

Handling connection for 8880

[info kamus-cli]: Successfully encrypted data to dummy-sa service account in my-namespace namespace

[info kamus-cli]: Encrypted data:

NlHRuvaqFlip5mDP6d5cDw==:OVW3C2hY/wZW6o2I+YjL5Q==

Then we need to create a KamusSecret envelope object based on the following structure (please note that the documentation refers to a data section, which did not seem to work for us, so we have used the stringData syntax):

```
$ cat <<EOF >kamus.yaml
apiVersion: "soluto.com/v1alpha2"
kind: KamusSecret
metadata:
  name: db-credentials
  namespace: my-namespace
type: Generic
stringData:
  DBUSER: 0XZm9vDLVvjNGCgeFZqNnA==:GoaTZCXkwyfoBNRwjtgsOQ==
  DBPWD: NlHRuvaqFlip5mDP6d5cDw==:OVW3C2hY/wZW6o2I+YjL5Q==
serviceAccount: dummy-sa
EOF
```

This KamusSecret resource can now be safely stored under a source code versioning system like git.
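Since the client encrypts one value at a time, assembling the envelope by hand quickly becomes repetitive. As a rough sketch (the function name and argument order are our own and not part of Kamus), a small shell helper can render the same structure from values that have already been encrypted with kamus-cli:

```shell
#!/bin/sh
# Sketch: assemble a KamusSecret envelope from values already encrypted with
# kamus-cli. The function name and argument order are illustrative.
# Usage: render_kamus_secret NAME NAMESPACE SERVICE_ACCOUNT KEY=CIPHERTEXT...
render_kamus_secret() {
  name="$1"; namespace="$2"; service_account="$3"
  shift 3
  printf 'apiVersion: "soluto.com/v1alpha2"\n'
  printf 'kind: KamusSecret\n'
  printf 'metadata:\n'
  printf '  name: %s\n' "$name"
  printf '  namespace: %s\n' "$namespace"
  printf 'type: Generic\n'
  printf 'stringData:\n'
  for pair in "$@"; do
    # Split on the first "=" only: the ciphertext itself contains "==".
    printf '  %s: %s\n' "${pair%%=*}" "${pair#*=}"
  done
  printf 'serviceAccount: %s\n' "$service_account"
}

# Render the envelope for the two values encrypted earlier in this article.
render_kamus_secret db-credentials my-namespace dummy-sa \
  'DBUSER=0XZm9vDLVvjNGCgeFZqNnA==:GoaTZCXkwyfoBNRwjtgsOQ==' \
  'DBPWD=NlHRuvaqFlip5mDP6d5cDw==:OVW3C2hY/wZW6o2I+YjL5Q=='
```

Redirecting the output to a file gives a manifest equivalent to the hand-written one, ready to be committed.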

Apply the new resource:

$ kubectl apply -f kamus.yaml

Check the resources created:

$ kubectl get kamussecrets.soluto.com -n my-namespace

NAME AGE

db-credentials 20s

$ kubectl get secrets -n my-namespace

NAME TYPE DATA AGE

default-token-sc4ds kubernetes.io/service-account-token 3 9m3s

db-credentials Generic 2 53s

Verify the decryption:

$ kubectl get secrets -n my-namespace db-credentials -o jsonpath='{.data.DBUSER}' | base64 --decode

mydbuser

$ kubectl get secrets -n my-namespace db-credentials -o jsonpath='{.data.DBPWD}' | base64 --decode

highlysecret

### Zero-Trust Secrets Usage

In this mode, we will reuse the encrypted data from the first example, but this time we'll store it in a ConfigMap that will later be mounted as a volume of encrypted files (DBUSER and DBPWD):

$ cat <<EOF
apiVersion: v1
kind: ConfigMap
metadata:
  name: kamus-encrypted-secrets-cm
  namespace: my-namespace
data:
  DBUSER: 0XZm9vDLVvjNGCgeFZqNnA==:GoaTZCXkwyfoBNRwjtgsOQ==
  DBPWD: NlHRuvaqFlip5mDP6d5cDw==:OVW3C2hY/wZW6o2I+YjL5Q==
EOF

This config map can be safely stored under a git repository.

Next, we'll use the initContainer provided by Kamus, which invokes the decryption service on the mounted files and stores the results in a shared emptyDir volume for our "application" container to use (our application is a sleepy container that we'll inspect later on):

$ cat <<EOF >kamus-pod.yaml
apiVersion: v1
kind: Pod
metadata:
  namespace: my-namespace
  name: kamus-zero-trust-example
spec:
  serviceAccountName: dummy-sa
  automountServiceAccountToken: true
  initContainers:

Create our application pod:

$ kubectl create -f kamus-pod.yaml

pod/kamus-zero-trust-example created

And check the decrypted /secrets/config.json file available for our application container:

$ kubectl exec -n my-namespace kamus-zero-trust-example -c app -- more /secrets/config.json

{

"DBPWD":"highlysecret",

"DBUSER":"mydbuser"

}

Please note that:

➕ Multiple operating modes

➕ Working in zero-trust environments

➕ Can use a true KMS backend

➖ Confusing documentation

➖ Still early stages

➖ npm-based client

While Kamus offers more options than Bitnami Sealed Secrets, it requires substantially more effort for the most common scenarios. The documentation that comes with the solution is also sometimes confusing, although clarity has improved significantly since the early releases. Some customers and audiences will be particularly receptive to its ability to operate in a zero-trust environment, even though this requires a significant setup and the injection of init containers into workloads. It can also use sophisticated, cryptographically secure vault solutions as a backend, which is a strong argument when this is a requirement.

In most common scenarios, however, Bitnami Sealed Secrets remains particularly effective, compact and straightforward to operate. It has been around for quite some time now, is well maintained, and is becoming a de facto answer for lightweight secrets management in GitOps workflows, for very good reasons.

As usual, there is no best all-around alternative, but there is always a best answer to a particular use-case, given the requirements and constraints of its environment. We hope this article will help you select the secrets solution for your GitOps scenarios.